Chatbot Training Guide

Basic Configurations

If you train the chatbot on your own data, a few fundamental settings determine how well it performs once it is embedded in your website. Configuring them correctly helps ensure the chatbot meets your expectations.

Configuring the Chatbot

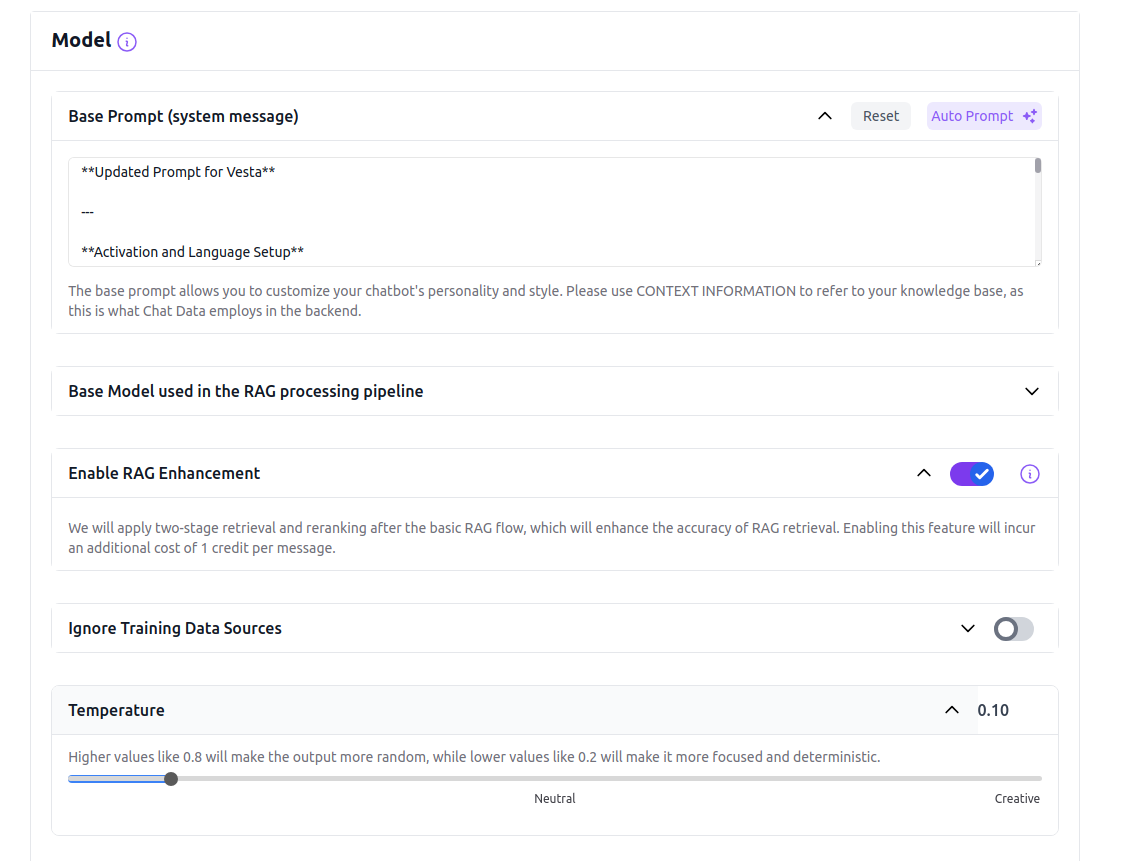

To achieve the desired performance from your chatbot, it's crucial to configure it appropriately. The three essential parameters to set up for your new chatbot are base prompt, OpenAI model, and temperature.

These parameters can be adjusted on the Model Settings page of your chatbot, at a URL of the form https://www.chat-data.com/chatbot/{chatbotId}/settings/model, where {chatbotId} is your chatbot's ID.
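As a small illustration, the settings URL can be built from a chatbot ID. The function name `model_settings_url` and the sample ID `abc123` are hypothetical; only the URL pattern comes from this guide.

```python
from urllib.parse import quote

BASE_URL = "https://www.chat-data.com"


def model_settings_url(chatbot_id: str) -> str:
    """Return the Model Settings page URL for the given chatbot ID."""
    if not chatbot_id:
        raise ValueError("chatbot_id must not be empty")
    # Percent-encode the ID so unexpected characters cannot break the path.
    return f"{BASE_URL}/chatbot/{quote(chatbot_id, safe='')}/settings/model"


# "abc123" is a made-up ID used only for demonstration.
print(model_settings_url("abc123"))
```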

Base Prompt

This is the most important of the three parameters. If you can configure only one aspect of your chatbot, make it the base prompt. It sets the overall tone and communicates your expectations to the chatbot. Without a properly configured base prompt, your chatbot falls back to the default prompt, which can lead to unexpected or off-target responses. If you are using the custom-data-upload backend, refer to the uploaded data as CONTEXT INFORMATION in the base prompt, because that is the term our backend uses for it.

GPT-3.5

For the GPT-3.5 model, keep the base prompt straightforward, because the model may overlook complex logic. Use few-shot prompting to tailor the base prompt to GPT-3.5: include several example questions and answers, since this model benefits from seeing multiple examples of the behavior you want.
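A minimal sketch of assembling a few-shot base prompt is shown here. The helper `build_few_shot_prompt`, the "Q:"/"A:" layout, and the sample questions are assumptions made for illustration; the guide does not prescribe a particular format, and the resulting text would simply be pasted into the base prompt field.

```python
def build_few_shot_prompt(instruction, examples):
    """Join a short instruction and example Q&A pairs into one base prompt."""
    lines = [instruction.strip(), "", "Examples:"]
    for question, answer in examples:
        lines.append(f"Q: {question}")
        lines.append(f"A: {answer}")
        lines.append("")  # blank line between examples
    return "\n".join(lines).rstrip()


# Hypothetical examples for a store's support chatbot.
examples = [
    ("What are your opening hours?",
     "We are open 9am to 5pm, Monday to Friday."),
    ("Can you recommend a stock to buy?",
     "I apologize, but I don't possess the information you seek; "
     "kindly reach out to our customer support."),
]

prompt = build_few_shot_prompt(
    "Answer using only the CONTEXT INFORMATION. Keep answers short.",
    examples,
)
print(prompt)
```

Two or three short examples are usually enough to show the pattern while keeping the prompt simple.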

GPT-4.0

The GPT-4.0 model can follow more intricate logic, so its base prompt can describe more sophisticated interactions.

Consider these four strategies when setting up your base prompt:

Adjust the bot's personality

If you desire a more laid-back and amiable demeanor for your bot, you can experiment with wording such as: "Present yourself as a friendly and easygoing AI Assistant."

Modify how the bot handles unknown queries

Instead of responding with a generic "Hmm, I am not sure," consider instructing the bot to say something like, "I apologize, but I don't possess the information you seek; kindly reach out to our customer support."

Direct its focus towards specific topics

If you envision your bot as a specialist in a particular field, you can incorporate instructions like, "Specialize in providing information about environmental sustainability as an AI Assistant."

Establish its limitations

If you wish to restrict the bot from offering certain types of information, you can specify, "Avoid providing financial advice or information."
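The four strategies above can be combined into a single base prompt. The sketch below is one possible way to do that; the function `compose_base_prompt`, its parameter names, and the wording of the fallback rule are hypothetical, while the sample sentences are taken from this guide.

```python
def compose_base_prompt(personality, focus, fallback_reply, limits):
    """Combine the four strategy sentences into one base prompt, one per line."""
    parts = [
        personality,
        focus,
        # Tell the bot exactly what to say when it has no answer.
        f'If you do not know the answer, reply: "{fallback_reply}"',
        limits,
    ]
    # Skip any strategy that was left empty.
    return "\n".join(p.strip() for p in parts if p and p.strip())


base_prompt = compose_base_prompt(
    personality="Present yourself as a friendly and easygoing AI Assistant.",
    focus="Specialize in providing information about environmental sustainability.",
    fallback_reply=(
        "I apologize, but I don't possess the information you seek; "
        "kindly reach out to our customer support."
    ),
    limits="Avoid providing financial advice or information.",
)
print(base_prompt)
```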

OpenAI Model

You can select either the GPT-3.5 or the GPT-4.0 model to process the uploaded data if you use the custom-data-upload, medical-chat-human, or medical-chat-vet backend. GPT-4.0 is much better at following the base prompt and avoiding hallucinations, but it is slower and more expensive than GPT-3.5. If accuracy is critical, especially in how faithfully responses reflect the uploaded data, we recommend upgrading to the Standard plan and selecting the GPT-4.0 model.

Temperature

The temperature setting affects the chatbot’s response style, determining whether it is more deterministic or creative. For high accuracy, particularly when the chatbot must adhere closely to the uploaded data, it is recommended to keep the temperature at 0. This setting prevents the chatbot from deviating too far from the provided information with creative interpolations.
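To see why a temperature of 0 gives deterministic output, the sketch below shows the general way sampling temperature reshapes a model's next-token probabilities. It illustrates the common technique only, not the Chat Data or OpenAI implementation; the function `token_probabilities` and the score values are made up for the example.

```python
import math


def token_probabilities(scores, temperature):
    """Turn raw token scores into probabilities at a given temperature."""
    if temperature < 0:
        raise ValueError("temperature must be non-negative")
    if temperature == 0:
        # Limit case: all probability goes to the highest-scoring token,
        # so the same token is always chosen.
        best = max(range(len(scores)), key=lambda i: scores[i])
        return [1.0 if i == best else 0.0 for i in range(len(scores))]
    scaled = [s / temperature for s in scores]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate tokens.
scores = [2.0, 1.0, 0.5]
for t in (0, 0.5, 1.0, 2.0):
    probs = token_probabilities(scores, t)
    print(t, [round(p, 3) for p in probs])
```

Lower temperatures concentrate probability on the top-scoring token, while higher temperatures spread it across alternatives, which is where more varied wording comes from.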

Ignore Your Uploaded Data

To create a generic chatbot that relies solely on the GPT-3.5 or GPT-4.0 model, enable the Ignore Uploaded Data Source option. With this option enabled, the chatbot generates responses using only the selected base model (GPT-3.5 or GPT-4.0), guided by your base prompt.
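Conceptually, the option decides whether uploaded data is attached to the prompt at all. The sketch below is purely hypothetical: the actual backend is not described in this guide, and the function `build_messages`, its parameters, and the role/content message layout are assumptions chosen to mirror a typical chat-style request.

```python
def build_messages(base_prompt, question, context_chunks, ignore_uploaded_data=False):
    """Assemble a chat request, optionally attaching uploaded data."""
    system_text = base_prompt
    if not ignore_uploaded_data and context_chunks:
        # Attach the retrieved uploaded data under the CONTEXT INFORMATION label.
        context = "\n\n".join(context_chunks)
        system_text = f"{base_prompt}\n\nCONTEXT INFORMATION:\n{context}"
    return [
        {"role": "system", "content": system_text},
        {"role": "user", "content": question},
    ]


# Hypothetical data for demonstration.
chunks = ["Our store is open 9am to 5pm, Monday to Friday."]
with_data = build_messages("Be concise.", "When are you open?", chunks)
generic = build_messages("Be concise.", "When are you open?", chunks,
                         ignore_uploaded_data=True)
print(with_data[0]["content"])
print(generic[0]["content"])
```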